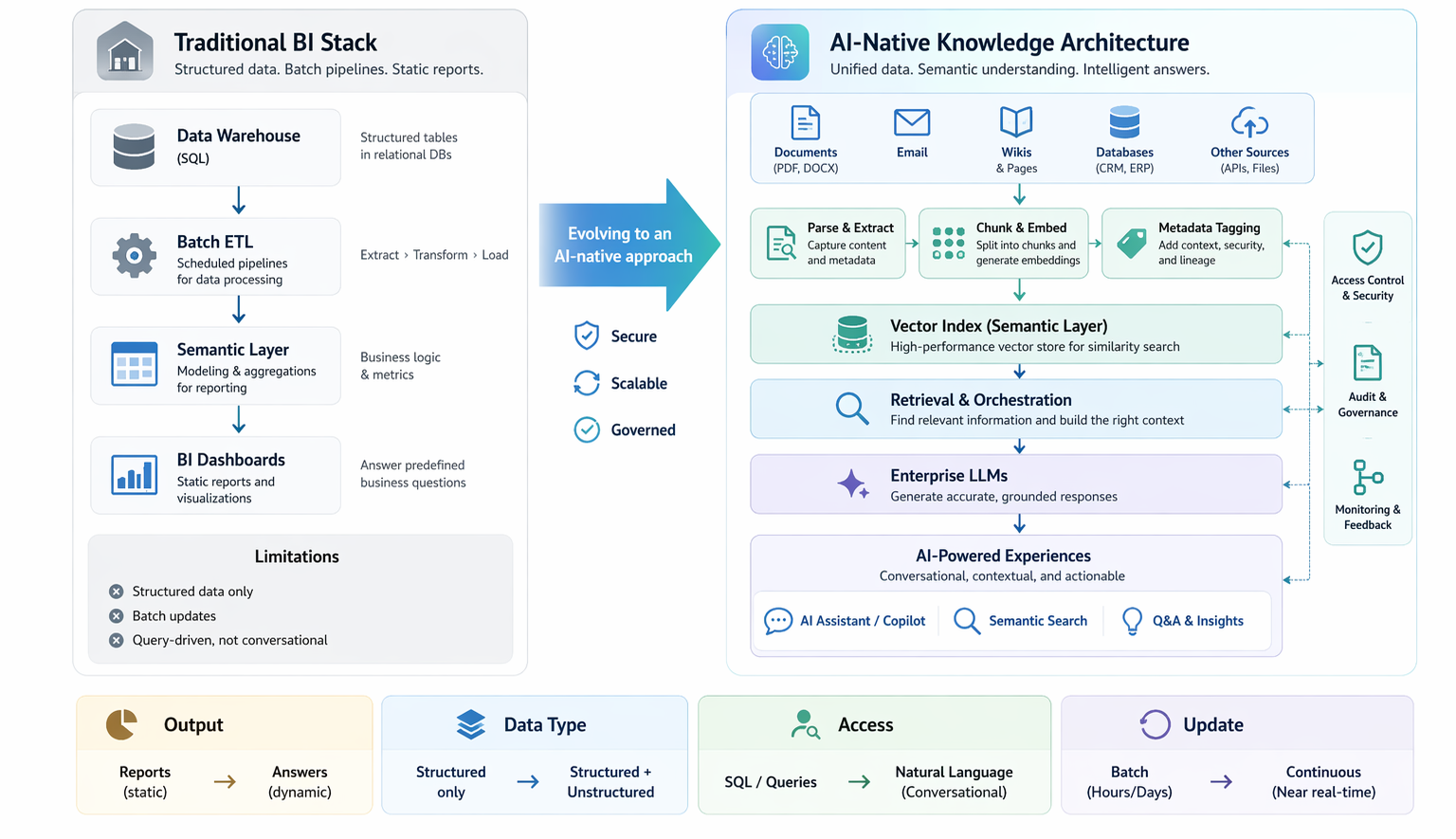

Many enterprises still design their AI data strategies as though each major system type will remain independent. One group works on LLM retrieval, another builds vision pipelines, and a third explores agents. But by 2026, this separation is becoming increasingly expensive, because enterprise value is moving toward systems where LLMs, agents, and vision AI interact inside the same business workflows.

The first reason this matters is that these systems consume different kinds of truth. LLMs rely heavily on knowledge structure. Agents depend on workflow state and permissions. Vision AI depends on visual evidence and spatial context.

The difference becomes especially visible in real workflows. A field issue may be detected visually, interpreted against its spatial context, described through a knowledge layer, and then passed into an agentic process for routing or action. That single business chain involves visual evidence, documents, workflow state, and often location.

This is why an enterprise data strategy across LLMs, agents, and vision AI must begin with shared reference structures rather than tool-specific silos.
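To make the idea of shared reference structures more concrete, here is a minimal sketch of the field-issue chain described above. Everything in it is hypothetical and chosen only for illustration: the `AssetRef` type, the record classes, the sample knowledge entry, and the queue names are assumptions, not part of any particular product or schema. The point it tries to show is that the vision output, the knowledge layer, and the agent's task all resolve against the same asset and site identifiers.

```python
from dataclasses import dataclass


# Hypothetical shared reference: every system points at the same identifiers.
@dataclass(frozen=True)
class AssetRef:
    asset_id: str
    site_id: str


# Vision AI output: visual evidence tied to a shared asset reference.
@dataclass
class VisionDetection:
    asset: AssetRef
    label: str
    confidence: float


# Knowledge-layer entry an LLM could retrieve, keyed by the same asset_id.
@dataclass
class KnowledgeEntry:
    asset_id: str
    issue_label: str
    guidance: str


# Workflow state an agent acts on, again carrying the shared reference.
@dataclass
class WorkflowTask:
    asset: AssetRef
    summary: str
    assigned_queue: str


# Illustrative sample data (assumed, not from any real system).
KNOWLEDGE = [
    KnowledgeEntry("pump-17", "corrosion", "Inspect seals and schedule coating repair."),
]
QUEUES_BY_SITE = {"site-north": "north-maintenance"}


def route_detection(detection, knowledge, queues):
    """Join a detection to guidance and a routing queue via shared identifiers."""
    guidance = next(
        (
            entry.guidance
            for entry in knowledge
            if entry.asset_id == detection.asset.asset_id
            and entry.issue_label == detection.label
        ),
        "No guidance on file.",
    )
    # Fall back to a holding queue when the site has no routing rule.
    queue = queues.get(detection.asset.site_id, "unassigned")
    return WorkflowTask(
        asset=detection.asset,
        summary=f"{detection.label} on {detection.asset.asset_id}: {guidance}",
        assigned_queue=queue,
    )


detection = VisionDetection(AssetRef("pump-17", "site-north"), "corrosion", 0.91)
task = route_detection(detection, KNOWLEDGE, QUEUES_BY_SITE)
print(task.assigned_queue, "|", task.summary)
```

If each team had defined its own asset naming instead, the join inside `route_detection` would need a translation table per pair of systems, which is one way the cost of tool-specific silos shows up in practice.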