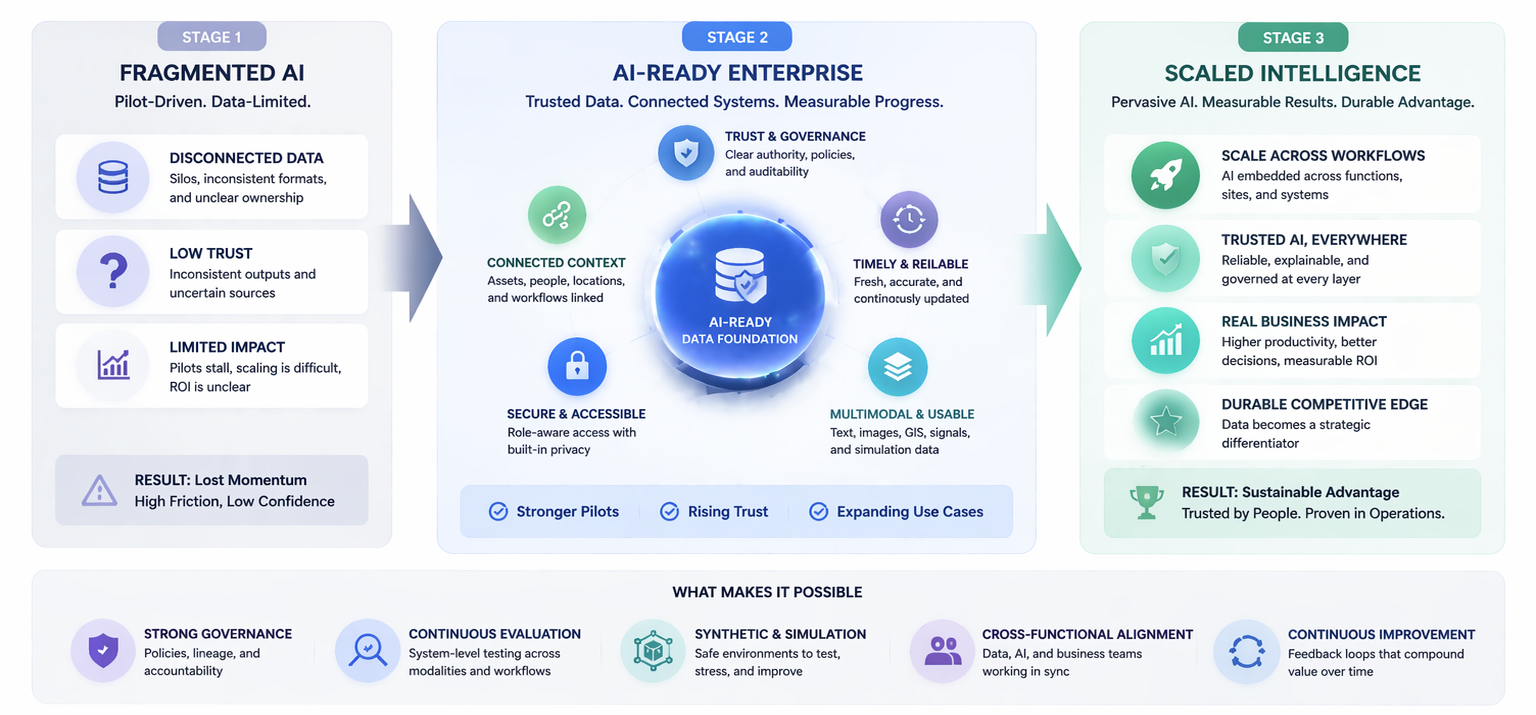

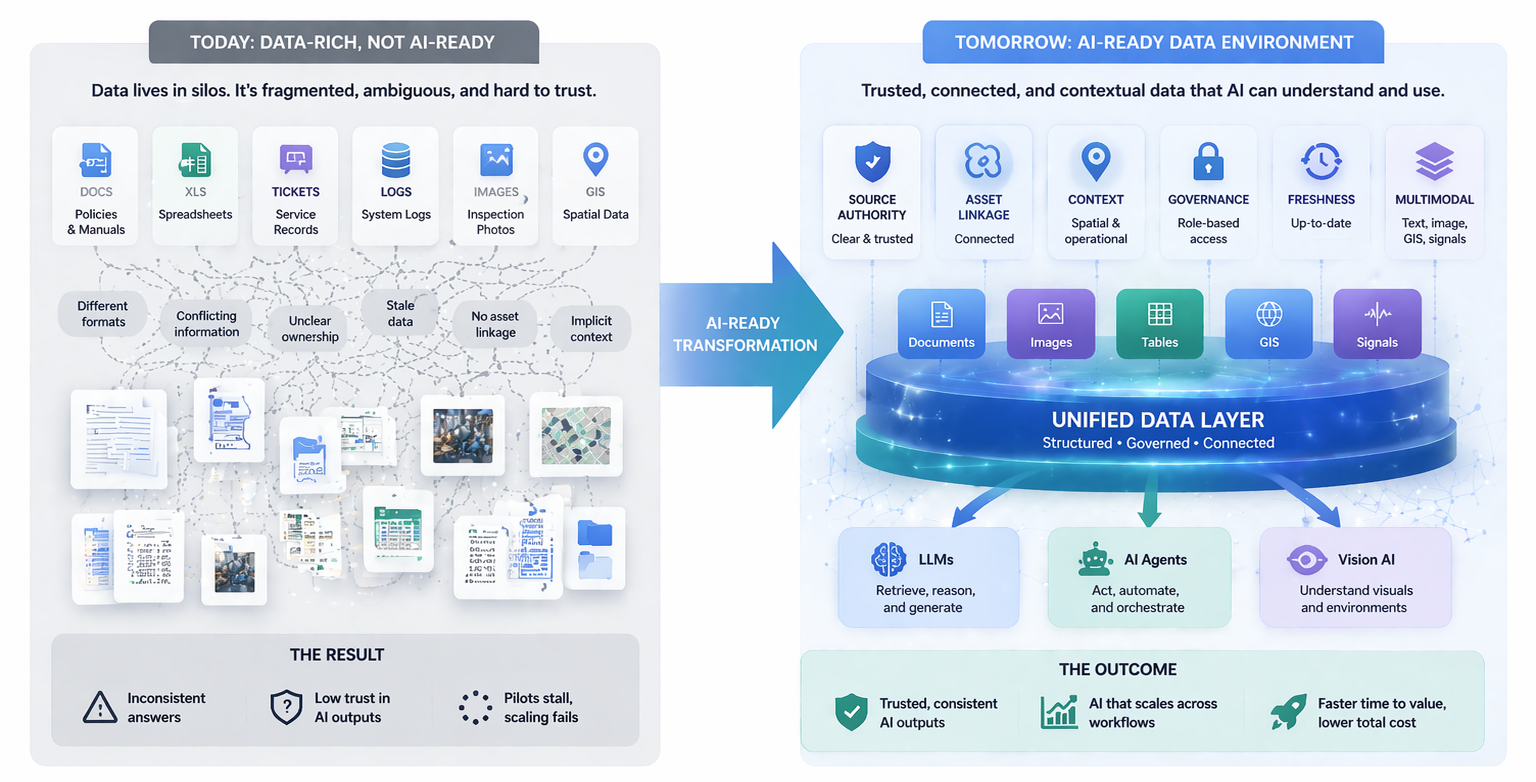

For many years, enterprise data conversations were dominated by the language of quality. Clean data, accurate data, complete data: these were the goals that data governance programs pursued. Quality matters, and no serious organization should deprioritize it. But as enterprise AI moves into operational settings, a different property is becoming more important: trust.

Data trust is not the same as data quality. A dataset can be technically accurate but lack trust because its provenance is unclear, its update frequency is unknown, or its authority within the organization is contested. Conversely, a dataset that has some imperfections but a clear owner, an understood update process, and a well-documented scope can be more trustworthy than a cleaner dataset whose origins are opaque.

Trust matters more than quality in enterprise AI because AI systems amplify the consequences of untrustworthy data at scale. When human analysts use a data source they do not fully trust, they apply judgment to compensate: they cross-reference, they question, they add caveats. AI systems typically do none of this unless specifically designed to. An LLM grounded in untrustworthy data will generate confident-sounding outputs on uncertain foundations, and the resulting errors are often invisible until they cause a problem.

Building data trust requires investment in provenance tracking, ownership clarity, update cadence documentation, and organizational acknowledgment of which data sources are authoritative for which purposes. These investments are often treated as administrative overhead, but in enterprise AI deployments they function as risk controls. Organizations that cannot answer "Where did this data come from, and who is responsible for it?" for each data source feeding their AI systems are carrying unquantified risk.
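To make this concrete, here is a minimal sketch of a trust record for a data source, with a check that lists which of the questions above a source cannot yet answer. The `SourceRecord` class, its field names, and the example sources are all hypothetical, chosen for illustration rather than taken from any particular governance tool.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import timedelta
from typing import Optional


@dataclass
class SourceRecord:
    """Trust metadata for one data source (hypothetical field names)."""
    name: str
    owner: Optional[str] = None                 # accountable person or team
    provenance: Optional[str] = None            # where the data originates
    update_cadence: Optional[timedelta] = None  # documented refresh interval
    authoritative_for: set[str] = field(default_factory=set)  # approved purposes


def trust_gaps(source: SourceRecord) -> list[str]:
    """Return the names of the trust fields this source has not documented."""
    gaps = []
    if not source.owner:
        gaps.append("owner")
    if not source.provenance:
        gaps.append("provenance")
    if source.update_cadence is None:
        gaps.append("update_cadence")
    if not source.authoritative_for:
        gaps.append("authoritative_for")
    return gaps


# Example inventory: one documented source, one with no trust metadata at all.
sources = [
    SourceRecord(
        name="crm_accounts",
        owner="sales-ops",
        provenance="CRM nightly export",
        update_cadence=timedelta(days=1),
        authoritative_for={"customer_contact"},
    ),
    SourceRecord(name="legacy_pricing_sheet"),
]

for s in sources:
    print(s.name, trust_gaps(s))
```

Running a check like this across every source that feeds an AI system turns "unquantified risk" into a list of specific, assignable gaps.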

The shift from quality-centric to trust-centric data governance is not purely conceptual. It changes what governance programs measure, what they prioritize, and what controls they build. Quality metrics measure accuracy and completeness. Trust metrics measure clarity of authority, freshness assurance, and organizational confidence in the source. Both matter, but trust is the property that determines whether AI outputs can be relied upon in operational settings.
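The difference in what gets measured can be shown with a small sketch. It computes one quality metric (completeness) and one trust metric (freshness against a documented cadence) for the same dataset. The function names, the sample rows, and the seven-day cadence are assumptions made for illustration, not a standard definition of either metric.

```python
from datetime import datetime, timedelta, timezone


def completeness(rows: list, required: list) -> float:
    """Quality metric: share of required fields that are populated."""
    total = len(rows) * len(required)
    if total == 0:
        return 0.0
    filled = sum(
        1 for row in rows for key in required if row.get(key) not in (None, "")
    )
    return filled / total


def is_fresh(last_updated: datetime, cadence: timedelta, now: datetime) -> bool:
    """Trust metric: was the source refreshed within its documented cadence?"""
    return now - last_updated <= cadence


# Hypothetical dataset: every required field is filled in...
rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": "b@example.com"},
]
# ...but the last refresh was 40 days ago against a 7-day documented cadence.
now = datetime(2024, 6, 10, tzinfo=timezone.utc)
last_updated = datetime(2024, 5, 1, tzinfo=timezone.utc)

quality = completeness(rows, ["id", "email"])
fresh = is_fresh(last_updated, timedelta(days=7), now)
print(f"completeness={quality:.2f}, fresh={fresh}")
```

In this example the dataset scores perfectly on completeness while failing the freshness check, which is the kind of gap a quality-only program would not surface.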

Enterprises that build trust-centric data governance programs tend to see AI system reliability improve. Users who understand where information comes from and how authoritative it is are better equipped to apply appropriate confidence to AI outputs. That calibration makes the difference between AI as a useful tool and AI as a liability.